SLV Adds Benchmark Tool for Solana RPC / Geyser gRPC / Shredstream — Region-Aware Filtering Reveals Bottlenecks in Globally Distributed Infrastructure


ELSOUL LABO B.V. (Headquarters: Amsterdam, Netherlands; CEO: Fumitake Kawasaki) and Validators DAO have added a benchmark tool to SLV, the open-source Solana development tool, for comparing Solana RPC, Solana Geyser gRPC, and Solana Shredstream performance side by side.

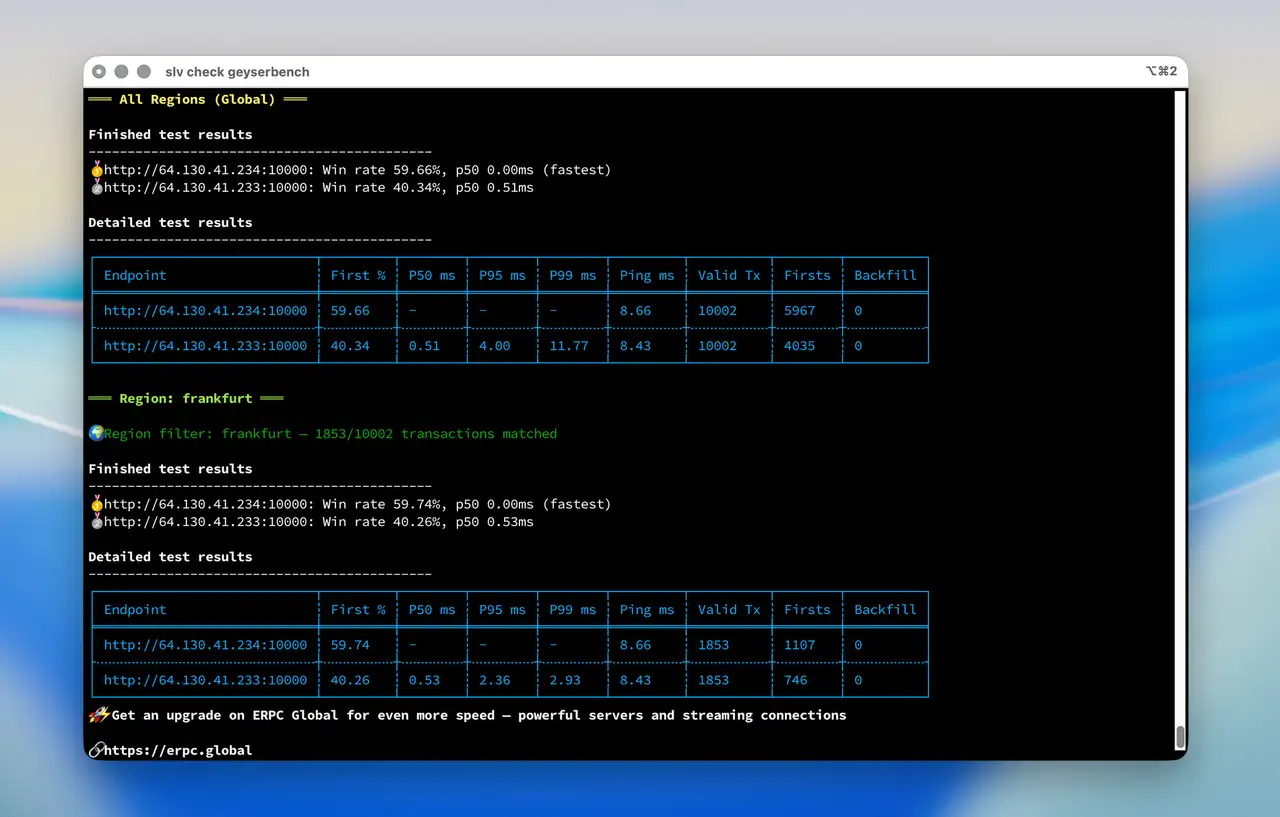

The key differentiator is region-aware filtering. While conventional benchmark tools compare aggregate results across all slots, SLV's benchmark tool outputs both a global (all-region) result and a filtered result isolating only data originating from validator leaders in a specific region. It launches with a single command and supports both interactive and flag-based execution.

For developers and traders pursuing ultra-low latency, being able to more accurately assess their infrastructure's true performance within their own region fundamentally changes how they select environments and design architectures.

SLV Website: https://slv.dev/en

Speed Comparison Documentation: https://slv.dev/en/doc/geyser-grpc/speed-comparison/

Why Region-Aware Measurement Matters — A Structural Challenge of Globally Distributed Blockchains

Solana's block production rotates the validator leader across the globe at short intervals. One slot may have a leader in Frankfurt, the next in Tokyo, and the one after that in New York, so the leader's geographic location is constantly shifting.

Conventional benchmark tools aggregate data across all slots. This is a valid approach for general evaluation, but it has structural limitations when pursuing ultra-low latency.

When a server in Frankfurt receives data generated by a validator leader in Tokyo, propagation delay due to physical distance is unavoidable. This delay is a geographic handicap that occurs regardless of server or network quality. When all slots are measured together, this geographic handicap contaminates the numbers, and the true performance within your own region gets buried in noise.
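
To give a sense of scale for this geographic handicap, the following is a rough back-of-the-envelope sketch rather than anything SLV measures. It assumes an ideal straight fiber path along the great circle, approximate city coordinates, and a signal speed in fiber of about 200 km per millisecond (roughly two thirds of the speed of light in vacuum). Real routes are longer and add switching delay, so actual latency is higher. Under these assumptions the one-way floor between Frankfurt and Tokyo comes out in the high 40s of milliseconds.

```python
import math

# Approximate (latitude, longitude) in degrees; illustrative values only.
FRANKFURT = (50.11, 8.68)
TOKYO = (35.68, 139.69)

EARTH_RADIUS_KM = 6371.0
# Assumption: light in optical fiber covers roughly 200 km per millisecond.
FIBER_SPEED_KM_PER_MS = 200.0


def great_circle_km(a, b):
    """Haversine distance between two (lat, lon) points, in kilometres."""
    lat1, lon1, lat2, lon2 = map(math.radians, (*a, *b))
    h = (math.sin((lat2 - lat1) / 2) ** 2
         + math.cos(lat1) * math.cos(lat2) * math.sin((lon2 - lon1) / 2) ** 2)
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(h))


def min_one_way_delay_ms(a, b):
    """Lower bound on one-way delay over an ideal straight fiber path."""
    return great_circle_km(a, b) / FIBER_SPEED_KM_PER_MS


print(f"distance: {great_circle_km(FRANKFURT, TOKYO):.0f} km")
print(f"one-way floor: {min_one_way_delay_ms(FRANKFURT, TOKYO):.1f} ms")
```

No server or network upgrade can push latency below this floor, which is why slots led from a distant region pull an aggregate figure upward.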

SLV's benchmark tool solves this problem. By separating the global result from the result filtered to a specific region's validator leaders, it enables infrastructure evaluation that accounts for geographic conditions.
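
The idea of separating the two views can be illustrated with a minimal sketch. This is not SLV's implementation or output format: the `SlotSample` record, the region labels, and the latency values are all hypothetical, and the median is just one possible summary statistic.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class SlotSample:
    slot: int
    leader_region: str  # hypothetical label for where the slot's leader is located
    latency_ms: float   # hypothetical arrival latency observed at our server


def summarize(samples, region=None):
    """Median latency over all slots, or only slots whose leader is in `region`."""
    selected = [s.latency_ms for s in samples
                if region is None or s.leader_region == region]
    return median(selected) if selected else None


# Made-up measurements taken from a server located in Frankfurt.
samples = [
    SlotSample(1, "frankfurt", 4.0),
    SlotSample(2, "tokyo", 120.0),
    SlotSample(3, "new_york", 45.0),
    SlotSample(4, "frankfurt", 6.0),
    SlotSample(5, "tokyo", 118.0),
]

global_median = summarize(samples)                     # all-region view
local_median = summarize(samples, region="frankfurt")  # region-specific view
print(global_median, local_median)
```

With this made-up data the all-region median is 45.0 ms while the Frankfurt-only median is 5.0 ms, which shows how an aggregate can hide the performance a server delivers against leaders in its own region.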

Two Perspectives That Change Strategy

The all-region result shows overall response quality against leaders everywhere. For dApps with a global user base, or use cases that require stable responses regardless of leader location, this metric matters most.

The region-specific result shows your true performance against leaders where your server has a geographic advantage. For high-frequency trading and arbitrage, where millisecond-level advantages directly determine outcomes, what matters is how fast you can reach data when the leader is in your own region.

Which metric to prioritize depends on use case and strategy. What matters is that data which was previously visible only as a single aggregate can now be evaluated separately. For example, when switching providers, you can determine not just the change in overall win rate, but whether performance improved or degraded specifically against leaders in a particular region. This substantially improves the precision of infrastructure decisions.
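
A provider comparison of this kind can be sketched as follows. The sketch assumes that "win rate" means the share of slots in which one provider delivered data before the other, which is an interpretation rather than a definition taken from SLV. The observation tuples and the `win_rates` helper are hypothetical.

```python
from collections import defaultdict

# (leader_region, arrival_ms_provider_a, arrival_ms_provider_b) per slot.
# All values are made up for illustration.
observations = [
    ("frankfurt", 5.0, 7.0),
    ("frankfurt", 6.0, 5.5),
    ("frankfurt", 4.0, 9.0),
    ("tokyo", 121.0, 118.0),
    ("tokyo", 119.0, 117.0),
    ("new_york", 44.0, 46.0),
]


def win_rates(observations):
    """Share of slots where provider A arrived first, overall and per leader region."""
    wins = defaultdict(int)
    totals = defaultdict(int)
    for region, a_ms, b_ms in observations:
        # Count each slot once in the aggregate and once in its own region.
        for key in ("all", region):
            totals[key] += 1
            if a_ms < b_ms:
                wins[key] += 1
    return {key: wins[key] / totals[key] for key in totals}


rates = win_rates(observations)
for key, rate in rates.items():
    print(f"{key}: {rate:.0%}")
```

In this example provider A wins half of all slots, which looks like a tie in aggregate, yet it wins two thirds of the Frankfurt-led slots and none of the Tokyo-led ones. The per-region breakdown is what tells you which provider suits a server in a given location.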

Solana RPC / Geyser gRPC / Shredstream — All Three Communication Layers Covered

SLV's benchmark tool covers all three major communication layers of Solana infrastructure.

Solana RPC is the interface through which every Solana application accesses the network. Solana Geyser gRPC provides real-time streaming of on-chain data, used for price feeds and account state monitoring. Solana Shredstream receives block data fragments directly from the leader, offering the lowest-latency data path available.

Each of these three layers can be compared across both all-region and region-specific views. Which layer has a bottleneck, which region shows a performance gap — a single tool gives you visibility across the board.

A Measurement Foundation That Comes Alive with SLV's AI Agents

The benchmark tool is useful on its own, but when combined with SLV's AI agent environment, it connects measurement to improvement in a single workflow.

SLV is an AI Agent Kit covering the full lifecycle of Solana development. It includes purpose-built specialist agents: Cecil for validator operations, Tina for indexed Solana RPC node management, Cloud for Solana Geyser gRPC streaming configuration, and Setzer for Solana app development. When you make a request in natural language through the AI Console, it is automatically routed to the appropriate specialist agent.

The benchmark tool is MCP (Model Context Protocol) enabled, so AI agents can execute measurements and read the results directly. If a measurement reveals a performance gap in a specific region, server procurement, node migration, and configuration changes can all be carried out within the same SLV environment. Server procurement is already supported through SLV Metal, and zero-downtime node migration is fully implemented. Because the benchmark tool, migration tool, and tuning tools all share the same MCP foundation, AI agents can combine them to help identify bottlenecks and drive environment improvements.

Operating Solana nodes has traditionally required deep Linux expertise, CLI proficiency, and version management judgment. SLV's AI agents take on the bulk of this cognitive load. With the addition of the benchmark tool, AI agents now cover the starting point of the operational cycle — current-state measurement — enabling the full measure → decide → act → re-measure cycle to be driven through natural language.

Local Mode Support — SSH In and Benchmark on the Spot

SLV supports local mode in addition to remote management. You can SSH into a node and run SLV directly on that machine.

The benchmark tool works in local mode as is. You can run measurements directly on a live node, review results, and work with the AI agent to drive improvements as needed. No remote management node is required, so the setup is simple and you can assess your node's performance immediately.

Environments built in local mode can be gradually migrated to Ansible-powered remote management as the project grows. Start with one machine and scale when you need to: this SLV design philosophy carries over to the benchmark tool as well.

Key Data for High-Frequency Trading Decisions

Solana is a globally distributed blockchain. The fact that block production rotates among validators worldwide demands more complex judgment from high-frequency traders and bots than simple latency numbers alone can provide.

Which region's leaders give your setup an advantage? Which layer shows the gap? How does the win rate in a specific region change when you switch providers? SLV's benchmark tool provides the latency comparison, region-by-region comparison, and layer-by-layer comparison needed for these decisions, all in a single open-source tool.

Operational Expertise Accumulated in SLV, Released as Open Source

SLV's benchmark tool, like all other SLV features, is provided as open source.

SLV is an open-source tool that lets operators carry out everything from Solana validator and Solana RPC node setup to daily operations, primarily through natural-language interaction with AI agents in a no-code workflow. It covers deployment, upgrades, downgrades, zero-downtime migration, server procurement, Solana Geyser gRPC configuration, and Solana app development, spanning the full lifecycle of Solana development. The benchmark tool adds current-state measurement as a starting point to this operational foundation.

The features in SLV, including this benchmark tool, reflect expertise gained from real-world operations on the ERPC platform. Optimization techniques and tuning parameters accumulated through operating the Epics DAO validator — which has reached world No. 3 among all Solana validators — and through feedback from users in over 100 countries, are published as open source through SLV.

The benchmark tool is available free of charge to anyone looking to evaluate and compare their Solana operational environment.

SLV GitHub: https://github.com/validatorsDAO/slv

Five Consecutive Years of WBSO Approval

ELSOUL LABO has received approval under the Dutch government's WBSO R&D incentive program for five consecutive years since 2022. The company continues research and development on ultra-low-latency Solana RPC infrastructure and automation of validator placement and operational orchestration, with results directly implemented in SLV's toolset.

Contact

For inquiries about SLV and ERPC, please create a support ticket on the Validators DAO official Discord.

Validators DAO Discord: https://discord.gg/C7ZQSrCkYR

Links

- SLV Website: https://slv.dev/en

- SLV Speed Comparison: https://slv.dev/en/doc/geyser-grpc/speed-comparison/

- SLV GitHub: https://github.com/validatorsDAO/slv

- ERPC Website: https://erpc.global/en

- Validators DAO Discord: https://discord.gg/C7ZQSrCkYR